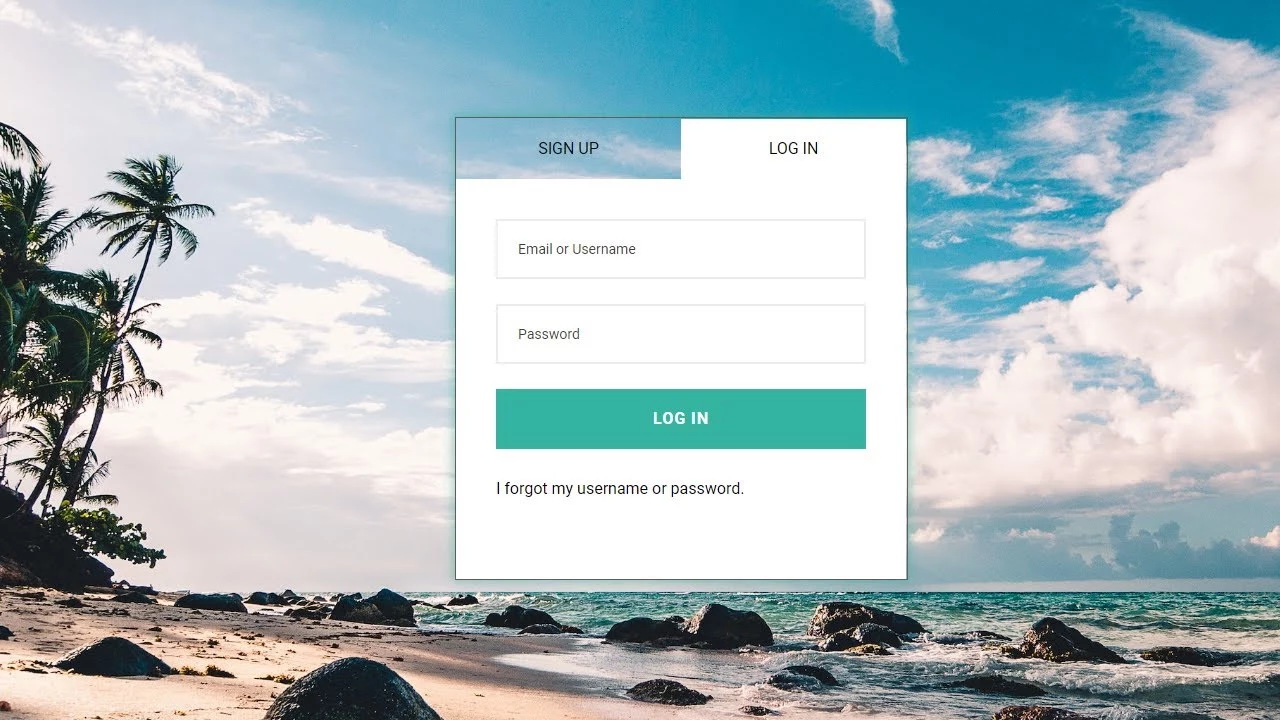

Login form design – SignUp form design free Tutorials | HTML – CSS -JavaScript

Backend Engineering: L-R-U Cash

Today we will know what is cashing and how L-R-U Cash works. If you already know about caching then you can read the first part quickly and move on to the next part.

Let’s say you are building an Android app. You have assigned 3 levels to the users of the app, Platinum, Gold and Silver. Whenever a user starts the app, you need to check the user’s level in your database. Your app has 100,000 users and thousands of users use the app every hour.

Once you see that database requests are increasing and page load is slow. The solution to this problem is to save some user levels in memory. Whenever a user starts the app, you first see if that user’s level is saved in memory, if so, you don’t need to send a request to the database. This process is called caching.

Caching is not always done for reading from the database. In computers, data is taken from the hard disk and kept in RAM for quick access, this is also a type of caching. Again, each CPU core caches some data to itself so that it does not have to read more than the RAM. You can understand that caching can be in many layers.

Now one problem always faced while doing caching is that cache memory size is much smaller than database hard disk. Maybe you have 5 terabytes of space on your hard disk, but your RAM size is 32 gigabytes. The problem is, not all data can be loaded into cache memory at once.

If the cache gets full while loading new data then some old data has to be discarded. This process is called Cache Eviction or Cache Eviction Policy. There are many types of algorithms for cash eviction, such as: FIFO (First in first out), FILO (First in last out), LRU (least recently used) etc.

Login form design – SignUp form free design Tutorials | HTML – CSS -JavaScript

LRU cache

Today we will see how LRU cache works and how to implement it. The LRU cache remembers the last time the data was accessed each time the data is accessed. If the cache becomes full while saving new data to the cache, it discards the outdated data. In this case, the earlier the data was accessed, the older it is (least recent).

Let’s do a small simulation. Think your cache memory size is only 3, that is, we can keep the data of only 3 users in the cache. Initially suppose Alice is logged in and you query the cache and see that Alice is not there. If you query the database and see that Alice is a platinum user, you cache that data. Check out the picture below:

Now another user Bob came who is also a gold user, add him to the cache:

Next came silver user John:

Meanwhile, what Bob did, opened another page in the app, which again queried the cache. Now that Bob’s status is in the cache, there will be no need to query the database. Meanwhile, since Bob’s status is the last accessed, he will be ‘most recent’, the order of the others will be the same:

Now if a fourth user Amy comes, what will happen? The oldest key will be dropped, in this case it is Alice.

While implementing cache we basically have to implement 2 basic functions. One is get(key) which takes a key as input and retrieves that key from the cache, another is put(key, value) which saves a key-value pair in the cache. So we will now see how I can implement these two functions for the LRU cache.

Algorithm 1: We can use a list/vector as a cache. Each position in the list (key, value) must be stored along with the last time it was accessed. I will replace all the old indexes by running a loop for the put operation. Find the key by looping through the get operation. Since the time complexity of both operations is O(n), we need something a little better.

Algorithm 2: Instead of List we can use a HashMap. As before, I will loop over the keys for the put operation and replace the old one in O(n) complexity. But the get operation will now work much faster, finding the key in hashmap in O(1) in average case. There has been some improvement.

Algorithm 3: The bottleneck of the previous algorithm was finding the oldest key. Which data structure is used to keep the keys sorted? We can use a balanced binary search tree. In that case both put and get will work in O(logn).

Algorithm 4: Both operations can be done in O(1) using doubly linked-lists. In today’s article, I will describe this algorithm in detail.

LRU cache using doubly linked-lists

I mentioned a solution using HashMap in Algorithm 2 above. By keeping the key-value pairs in the hashmap, we can find the key very quickly. But the problem was finding the oldest key. To solve that we will use Doubly linked-list. Each node in a doubly linked list points to its predecessor and successor. Take a look at the image below, it will help to explain:

Login form design – SignUp form free design Tutorials | HTML – CSS -JavaScript

I have now put my data in a linked list. Each node in the list is a key-value pair. The newest data will be near the left head of the list, the oldest data will be on the right. Also I will have a HashMap as before but this time the HashMap will only have the addresses of the nodes of the linked list instead of the values.

Now when a data comes then you have to find whether the data is already in the hashmap or not. If there is, then that node should be deleted from the linked list first. Then a new node should be placed to the left of the list. The same should be done when any data is queried because the data being queried is the newest data.

Deleting a node in a doubly linked list is very easy, just point the back node to the front node. And because of keeping the address of the node in the hashmap, the node can be found in O(1) average.

Then there is no need to loop through the oldest data for the Put operation. If the cache capacity is full then deleting the leftmost node is done in O(1).

This is possible.

Then we get an algorithm that is O(1)

A LRU can read-write data from the cache.

We can implement the code in C++. At the beginning I will define the node class of the linked list:

Implementing the linked list is the hard part of this code. The main logic becomes easier if we create a LinkedList class. We need methods to insert nodes to the left of the linked list and delete nodes from the list.

With our basic structure ready, let’s implement the cache:

If you follow the code step by step you will understand how it works. If you want to practice then you can submit your code to litcode. LRU Cash is a very common interview question.

In practice you may never need to implement this, most caching mechanisms support the LRU method, such as Redis. Cache data has some other properties, such as TTL or Time to live, which defines how long a data will expire on its own. You can try to figure out how to implement it!

If the cache data is not available in the memory of a server, then the data has to be spread over multiple servers using distributed caching technique, which is a bit more complicated, in that case you need to know a technique called consistent hashing, I have discussed it in another article.

Happy Coding!

Before Download

You must Join our Facebook Group and Subscribe YouTube Channel

All Links in Below:

Join Our FreeWebsiteCreate Facebook Group to get an instant update for projects, templates, design resources, and solutions.

Join Our YouTube Channel & Subscribe with Bell Icon for New Video:

Join Our Official Facebook Page For the Latest updates All Code Projects are Free:

Visit our service page to get premium services.

Free Website Create – HTML CSS, PHP, JavaScript Programming Projects For Free

Follow Us

Thank You,

Before Download

You must Join our Facebook Group and Subscribe YouTube Channel

FreeWebsiteCreate.net tries to provide HTML, CSS, SCSS, JavaScript, React, Android Studio, Java, PHP, Laravel, Python, Django, C#(C Sharp), and ASP.net-related projects 100% free. We try to make learning easier. Free Website Create always tries to give free projects to new learners. Free projects and source code will help to learn quickly.

They can save time and learn more. In this post, we share a free portfolio project website code with HTML and CSS. This free code portfolio contains a single landing page with a responsive design. In this post, we get a free best carpenter and craftsman service website designed by FreeWebsiteCreate with HTML, CSS, Bootstrap, and JavaScript.